AWS Architecture Blog

Building Real Time AI with AWS Fargate

This post is a contribution from AWS customer Veritone. It was originally published on the company’s website.

Here at Veritone, we deal with a lot of data. Our product uses cognitive computing to analyze and interpret the contents of structured and unstructured data, particularly audio and video, and to turn the results into valuable insights for our customers.

Our platform is designed to ingest audio, video, and other types of data via a series of batch processes (called “engines”) that process the media and attach output to it, such as transcripts or facial recognition data.

Our goal was to design a data pipeline that could process streaming audio, video, or other content in real time, from sources such as IP cameras, mobile devices, and structured data feeds, through an open ecosystem of cognitive engines. This enables support for customer use cases like real-time transcription for live-broadcast TV and radio, face and object detection for public safety applications, and the real-time analysis of social media for harmful content.

Why AWS Fargate?

We use Docker containers as the deployment artifact for both our internal services and our cognitive engines. This gives us the flexibility to deploy and execute services in a reliable and portable way. AWS Fargate turned out to be a perfect tool for orchestrating the dynamic nature of our deployments.

Fargate allows us to quickly scale Docker-based engines from zero to any desired number without having to worry about pre-provisioning capacity or bootstrapping and managing EC2 instances. We use Fargate both as a backend for quickly starting engine containers on demand and for the orchestration of services that need to always be running. It enables us to handle sudden bursts of real-time workloads with a consistent launch time. Fargate also allows our developers to get near-immediate feedback on deployments without having to manage any infrastructure or deal with downtime, and integrating with it is simple.

Moving to Real Time

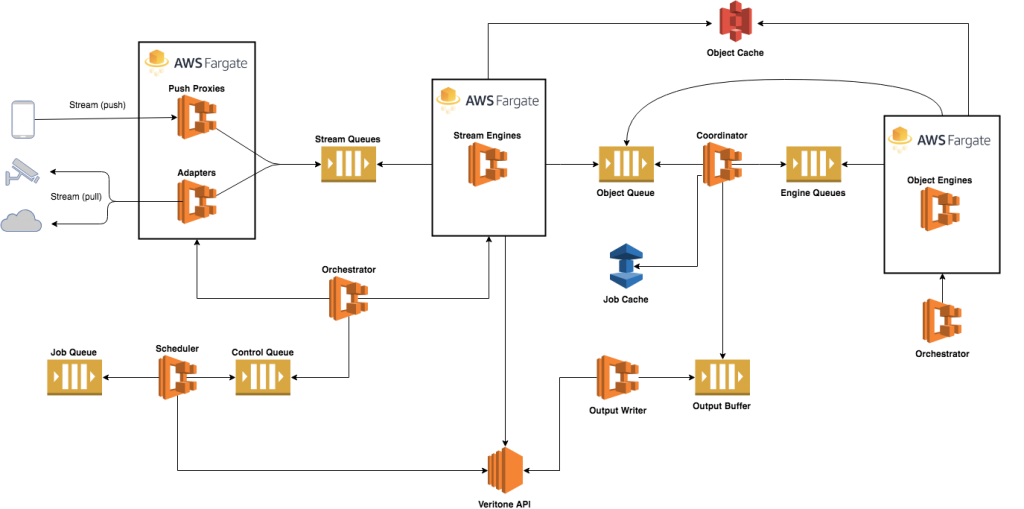

We designed a solution (shown above) in which media from a source is streamed through a series of containerized engines that process the data as it is ingested. The source can be a mobile app, which “pushes” streams into our platform, or an IP camera feed, which is “pulled”. Some engines, which we refer to as Stream Engines, work on raw media streams from start to finish. For all others, streams are decomposed into a series of objects, such as video frames or small audio/video chunks, that can be processed in parallel by what we call Object Engines. An output stream of results from each engine in the pipeline is relayed back to our core platform or customer-facing applications via Veritone’s APIs.
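To make the Object Engine idea concrete, here is a minimal sketch of splitting a stream into sequence-numbered objects, processing them in parallel, and restoring stream order afterwards. It is an illustration only, not Veritone's implementation: the `MediaObject` type, the fixed-size byte chunking, and the `object_engine` stand-in are all assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from dataclasses import dataclass
from typing import Iterator, List


@dataclass(frozen=True)
class MediaObject:
    """One independently processable piece of a stream (hypothetical format)."""
    stream_id: str
    sequence: int
    payload: bytes


def decompose(stream_id: str, data: bytes, chunk_size: int) -> Iterator[MediaObject]:
    """Split raw stream bytes into fixed-size, sequence-numbered objects."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for seq, start in enumerate(range(0, len(data), chunk_size)):
        yield MediaObject(stream_id, seq, data[start:start + chunk_size])


def object_engine(obj: MediaObject) -> dict:
    """Stand-in for a cognitive engine: it only reports the object's size."""
    return {"stream_id": obj.stream_id, "sequence": obj.sequence, "bytes": len(obj.payload)}


def run_pipeline(stream_id: str, data: bytes, chunk_size: int, workers: int = 4) -> List[dict]:
    """Process objects in parallel, then restore stream order by sequence number."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(object_engine, obj)
                   for obj in decompose(stream_id, data, chunk_size)]
        # Results arrive in completion order, which may differ from stream order.
        results = [future.result() for future in as_completed(futures)]
    return sorted(results, key=lambda r: r["sequence"])
```

Because every object carries its own sequence number, the engines can finish in any order and the results can still be reassembled into a coherent output stream.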

Message queues placed between the components facilitate the flow of stream data, objects, and events through the data pipeline. For that, we defined a number of message formats. We decided to use Apache Kafka, a streaming message platform, as the message bus between these components.

Kafka gives us the ability to:

- Guarantee that a consumer receives an entire stream of messages, in sequence.

- Buffer streams and have consumers process streams at their own pace.

- Determine the “lag” of engine queues.

- Distribute workload across engine groups by using partitions.
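Two of these properties are easy to show in a few lines. Kafka preserves message order within a partition, so routing every message of a stream to the same partition keeps that stream in sequence; and the lag of a consumer group on a partition is the difference between the newest offset in the log and the offset the group has committed. The sketch below is an illustration under stated assumptions: the function names are hypothetical, the offsets are passed in as plain dictionaries rather than fetched from a broker, and CRC32 stands in for the hash (Kafka's default partitioner for keyed messages uses murmur2 instead).

```python
import zlib
from typing import Dict


def partition_for(stream_id: str, num_partitions: int) -> int:
    """Map a stream to one partition so its messages stay in sequence.

    Assumption: CRC32 is a stand-in hash chosen for this example only.
    """
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    return zlib.crc32(stream_id.encode("utf-8")) % num_partitions


def consumer_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> Dict[int, int]:
    """Lag per partition: newest log offset minus the group's committed offset."""
    return {
        partition: max(end - committed.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def total_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> int:
    """Total number of messages an engine group has not yet processed."""
    return sum(consumer_lag(end_offsets, committed).values())
```

A total lag that keeps growing suggests the engine group is falling behind its input, which is the kind of signal an orchestrator can act on.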

The flow of stream data and the lifecycle of the engines are managed and coordinated by a number of microservices written in Go. These include the Scheduler, Coordinator, and Engine Orchestrators.

Deployment and Orchestration

For processing real-time data, such as streaming video from a mobile device, we required the flexibility to deploy dynamic container configurations and often define new services (engines) on the fly. Stream Engines need to be launched on demand to handle an incoming stream. Object Engines, on the other hand, are brought up and torn down in response to the amount of pending work in their respective queues.
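The scaling rule for Object Engines can be sketched as a small function that turns pending work into a target number of engines. This is one plausible policy, not the one Veritone's orchestrators necessarily use: `per_engine_capacity` (how many pending objects one engine is expected to absorb) and `max_engines` are hypothetical tuning parameters introduced for the example.

```python
import math


def desired_engine_count(pending: int, per_engine_capacity: int, max_engines: int) -> int:
    """Return how many engines should run for the given amount of pending work.

    Scales to zero when the queue is empty and never exceeds max_engines.
    """
    if per_engine_capacity <= 0:
        raise ValueError("per_engine_capacity must be positive")
    if max_engines < 0:
        raise ValueError("max_engines must not be negative")
    if pending <= 0:
        return 0
    return min(math.ceil(pending / per_engine_capacity), max_engines)


def scaling_delta(running: int, desired: int) -> int:
    """Positive: engines to launch. Negative: engines to tear down."""
    return desired - running
```

With a capacity of 50 objects per engine, 120 pending objects call for three engines, and an empty queue brings the group back down to zero.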

EC2 instances typically have to be provisioned in anticipation of incoming load, and they generally take too long to start for this use case. We needed a way to quickly scale Docker containers on demand, and Fargate made this achievable with very little effort.
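As a rough illustration of what launching an engine on demand can look like, the sketch below builds the request for the Amazon ECS `RunTask` API with the Fargate launch type. The cluster, task definition, container name, subnet, and the `STREAM_URL` environment variable are placeholders invented for the example, and the helper `build_run_task_request` is hypothetical. The code only assembles the request, so it runs without AWS credentials.

```python
from typing import Dict, List


def build_run_task_request(
    cluster: str,
    task_definition: str,
    container_name: str,
    subnets: List[str],
    stream_url: str,
    count: int = 1,
) -> Dict:
    """Assemble keyword arguments for an ECS RunTask call on Fargate."""
    if not 1 <= count <= 10:
        # RunTask starts at most 10 tasks per call.
        raise ValueError("count must be between 1 and 10")
    if not subnets:
        raise ValueError("at least one subnet is required for awsvpc networking")
    return {
        "cluster": cluster,
        "taskDefinition": task_definition,
        "launchType": "FARGATE",
        "count": count,
        # Fargate tasks use awsvpc networking, so subnets must be supplied.
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": list(subnets),
                "assignPublicIp": "DISABLED",
            }
        },
        # Per-launch settings: tell this engine which stream to process.
        "overrides": {
            "containerOverrides": [
                {
                    "name": container_name,
                    "environment": [{"name": "STREAM_URL", "value": stream_url}],
                }
            ]
        },
    }
```

With boto3 installed and credentials configured, the resulting dictionary could be passed to `boto3.client("ecs").run_task(**request)`. There is no instance to provision or bootstrap first, which is the property the paragraph above relies on.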

In Closing

Fargate helped us solve a lot of problems related to real-time processing in this dynamic environment, including reducing operational overhead. We expect it to continue to grow and mature as a service. Some features we would like to see in the near future include GPU support for our GPU-based AI Engines and the ability to cache larger container images for quicker “warm” launch times.

About Veritone

Veritone created the world’s first operating system for Artificial Intelligence. Veritone’s aiWARE operating system unlocks the power of cognitive computing to transform and analyze audio, video and other data sources in an automated manner to generate actionable insights. The Veritone platform provides customers ease, speed and accuracy at low cost.

The Veritone authors are Christopher Stobie – cstobie@veritone.com and Mezzi Sotoodeh – msotoodeh@veritone.com